This post is intended to help the student who is willing to put time and effort into succeeding in a college calculus class.

Part One: How to Study

The first thing to remember is that most students will have to study outside of class in order to learn the material. There are those who pick things up right away, but these students tend to be the rare exception.

Think of it this way: suppose you want to learn to play the piano. A teacher can help show you how to play it and provide a practice schedule. But you won’t be any good if you don’t practice.

Suppose you want to run a marathon. A coach can help you with running form, provide workout schedules and provide feedback. But if you don’t run those workouts, you won’t build up the necessary speed and endurance for success.

The same principle applies to college mathematics classes; you really learn the material when you study it and do the homework exercises.

Here are some specific tips on how to study:

1. If you can, spend a few minutes scanning the text for the upcoming lesson before class. If you do this, you’ll be alert for the new concepts as they are presented and the concepts might sink in more quickly.

2. There is some research that indicates:

a. It is better to have several shorter study sessions rather than one long one and

b. There is an optimal time delay between study sessions and the associated lecture.

Look at it this way: if you wait too long after the lesson to study it, you will have forgotten much of what was presented. If you study right away, then you really have, in essence, a longer classroom session. It is probably best to hit the material right when the initial memory starts to fade; this time interval will vary from individual to individual. For more on this and for more on learning for long term recall, see this article.

3. Learn the basic derivative formulas inside and out; that is, know the derivatives of the standard functions on sight; you shouldn’t have to think about them. The same goes for the basic trig identities.

Why is this? The reason is that much of calculus (though not all!) boils down to pattern recognition.

For example, suppose you need to calculate an integral in which a function appears alongside its own derivative.

If you don’t know your differentiation formulas, this problem is all but impossible. On the other hand, if you do know your differentiation formulas, then you’ll immediately recognize the function and its derivative, and you’ll see that this problem is really a very easy substitution problem.

But this all starts with having “automatic” knowledge of the derivative formulas.

Note: this learning is something your professor or TA cannot do for you!
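To make the pattern-recognition point concrete, here is one illustrative instance. The integrand is chosen here as an assumption for illustration; any integral in which a function sits next to its own derivative works the same way.

```latex
% Illustrative example (an assumed integrand, chosen for this sketch).
% Recognize arctan(x) together with its derivative 1/(1+x^2).
\[
  \int \frac{\arctan(x)}{1+x^{2}}\,dx
\]
% Substitute u = arctan(x), so that du = dx/(1+x^2).
\[
  u = \arctan(x), \qquad du = \frac{1}{1+x^{2}}\,dx
\]
% The integral collapses to the very easy problem of integrating u.
\[
  \int u\,du = \frac{u^{2}}{2} + C = \frac{\bigl(\arctan(x)\bigr)^{2}}{2} + C
\]
```

Without instant recall that the derivative of arctan(x) is 1/(1+x^2), the substitution is hard to spot; with it, the problem takes three lines.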

4. Be sure to do some study problems with your notes and your book closed. If you keep flipping to your notes and book to do the homework problems, you won’t be ready for the exams. You have to take off the training wheels.

Try this; the difference will surprise you. There is also evidence that forcing yourself to recall the material FROM YOUR OWN BRAIN helps you learn the material! Give yourself frequent quizzes on what you are learning.

5. When reviewing for an exam, study the problems in mixed fashion. For example, get some note cards and write problems from the various sections on them (say, some from 3.1, some from 3.2, some from 3.3, and so on), mix the cards, then try the problems. If you just review section by section, you’ll go into each problem knowing what technique to use each time right from the start. Many times, half of the battle is knowing which technique to use with each problem; that is part of the course! Do the problems in mixed order.

If you find yourself complaining “I don’t know where to start,” it means that you don’t know the material well enough. Remember that a trained monkey can repeat specific actions; you have to be a bit better than that!

6. Read the book, S L O W L Y, with pen and paper nearby. Make sure that you work through the examples in the text and that you understand the reasons for each step.

7. For the “more theoretical” topics, know some specific examples for specific theorems. Here is what I am talking about:

a. Intermediate value theorem: recall that a function can take two different values at two points and yet never take some value in between. Why does this not violate the intermediate value theorem?

b. Mean value theorem: note also that, for the same function, there may be no point at which the derivative equals the slope of the secant line between those two points. Why does this NOT violate the Mean Value Theorem?

c. Series: it is useful to know the basic power series for the standard functions. It is also good to know some basic examples such as the geometric series, the divergent harmonic series, and a conditionally convergent series.

d. Limit definition of derivative: be able to work a few basic examples of the derivative via the limit definition, and know why the derivatives of certain standard functions do not exist at a particular point.

Having some “template” example can help you master a theoretical concept.
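As a sketch of what such templates might look like, here is one standard example for each of the four topics above. The specific functions and series are assumptions chosen for illustration; other textbook examples serve equally well.

```latex
% (a), (b): assumed template function f(x) = 1/x on [-1, 1].
\[
  f(x) = \frac{1}{x}, \qquad f(-1) = -1, \qquad f(1) = 1,
  \qquad \text{but } f(x) \neq 0 \text{ for every } x .
\]
% Secant slope between x = -1 and x = 1, versus the derivative.
\[
  \frac{f(1) - f(-1)}{1 - (-1)} = 1,
  \qquad f'(x) = -\frac{1}{x^{2}} < 0 \text{ for all } x \neq 0 .
\]
% Neither theorem is violated: f is not continuous on [-1, 1]
% (it is undefined at x = 0), so the hypotheses fail.

% (c): assumed template series.
\[
  e^{x} = \sum_{k=0}^{\infty} \frac{x^{k}}{k!},
  \qquad
  \sum_{k=0}^{\infty} r^{k} = \frac{1}{1-r} \quad (|r| < 1),
\]
\[
  \sum_{k=1}^{\infty} \frac{1}{k} \text{ diverges},
  \qquad
  \sum_{k=1}^{\infty} \frac{(-1)^{k+1}}{k} = \ln 2
  \text{ converges conditionally.}
\]

% (d): limit definition, worked for the assumed example f(x) = x^2.
\[
  f'(x) = \lim_{h \to 0} \frac{(x+h)^{2} - x^{2}}{h}
        = \lim_{h \to 0} (2x + h) = 2x .
\]
% A derivative that fails to exist: g(x) = |x| at x = 0.
\[
  \lim_{h \to 0^{+}} \frac{|h|}{h} = 1
  \qquad \text{but} \qquad
  \lim_{h \to 0^{-}} \frac{|h|}{h} = -1 ,
\]
% so the two-sided limit, and hence g'(0), does not exist.
```

The value of each template is that it ties a theorem to its hypotheses: the first two examples fail only because continuity fails, which is a good way to remember why the continuity assumption is there.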

Part Two: Attitude

Your attitude will be very important.

1. Remember that your effort will be essential! Again, you can’t learn to run a marathon without getting off the couch and making your muscles sore. Learning mathematics involves some frustration and, yes, at times, some tedium. Learning is fun OVERALL but it isn’t fun at all times. You will encounter discomfort and unpleasantness along the way.

2. Remember that winners look for ways to succeed; losers and whiners look for excuses for failure. You can always find those who will be willing to enable your underachievement. Instead, seek out those who bring out your best.

3. Success is NOT guaranteed; that is what makes success rewarding! Think of how good you’ll feel about yourself if you master something that seemed impossible to master at first. And yes, anyone who has achieved anything that is remotely difficult has taken some lumps and bruises along the way. You will NOT be spared these.

Remember that if you duck the calculus challenge, you are, in essence, slamming many doors of opportunity shut right from the get-go.

4. On the other hand, remember that calculus (the first two semesters anyway) is a freshman-level class; exceptional mathematical talent is not a prerequisite for success. True, calculus is easy for some, but that isn’t the point. Most reasonably intelligent people can succeed if they are willing to put forth the proper effort in the proper manner.

Just think of how good it will feel to succeed in an area that isn’t your strong suit!

but that is the subject of another post)

. Typically, we insist that the functions be, say,

and note that it is a bit of a chore to show that the convolution of two

functions is

; one proves this via the Fubini-Tonelli Theorem.

functions need not be

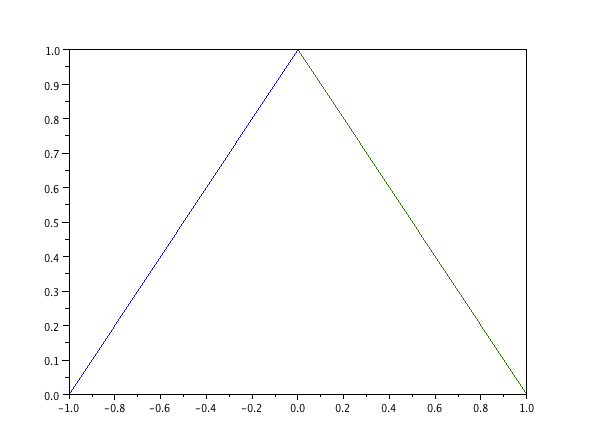

; e.g, consider

for

and zero elsewhere)

be the function that is

for

and zero elsewhere. So, what is

???

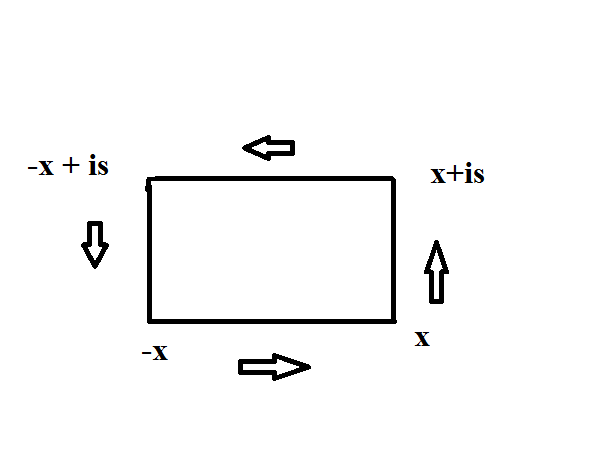

only assumes the value of

on a specific region of the

plane and is zero elsewhere; this is just like doing an iterated integral of a two variable function; at least the first step. This is why it fits well into calculus III.

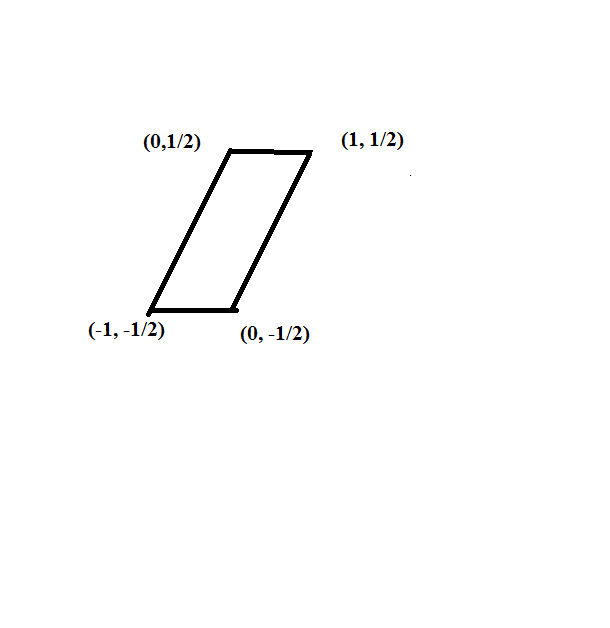

for the following region:

.

has the following description:

for

and

for

.